Much of the British right occupies itself with complaining about the dismal state of things. This does not lack all merit, but everyone with a functioning mind should understand by now that highlighting the ‘hypocrisy’ of political opponents or bleating about the latest manifestations of madness will change nothing. Then there are those of a more enterprising sort, predominantly North Americans, who turn political frustrations into a business opportunity by selling products solely on the basis that they are not from whichever socially liberal company riled them up. This also achieves very little in the grand scheme of things and portrays a right that is incapable of articulating an independent vision of the world.

Now we have an alternative in The Exhibition, an opening salvo from a group of artists who desire a culture which energises and inspires once again. Here was no place for coordinated agitprop, self-loathing or any of the other trends which make contemporary art so entropic and tiresome. Instead, the walls and pedestals of the Fitzrovia Gallery were adorned with a tangible yet heretofore seemingly unobtainable motivation towards creation.

Am I overplaying its significance? That is partially a question for posterity, yet even for laymen the momentum and excitement these artists are generating is undeniable. The art on display was eclectic in styles, themes and mediums across several dozen pieces. More importantly, however, it was fundamentally good art made by individuals who clearly have a passion for their craft. The nature of this act is political in its affront to progressive sensibilities, but the artists’ avoidance of explicitly political works served their aim of aesthetic appeal. The Exhibition was not a petty episode of ‘culture warring’, but something beyond it with a burgeoning artistic language reemphasising power, virtue and beauty within the human condition. In this sense, modernist inspirations could cooperate with more traditional styles without too much friction, and perhaps the breadth of traditions available to artists in the present can allow synthesis without imitation. I know too little about art to determine the originality of what was on display compared to historical forms, but it was nonetheless impressive to see.

Beyond my emphatic recommendation, I shall mention a few features of The Exhibition which stood out during my visit for those unable to attend; accompanying images can be found fairly easily on the artists’ Twitter feeds. It would be remiss not to mention our very own Sam Wild’s contributions. Amongst his works were a couple of our magazine covers, which are vivid watercolours in actuality. Three textiles by Ferro were a surprising but worthwhile inclusion; according to the website, they are in the Arts and Crafts tradition, yet with uniquely mystical patterns. The larger paintings, belonging to Alexander Adams, Matthew Fall McKenzie and Harald Markram, demonstrated yet another advantage of holding this exhibition, in that viewing art online seldom gives a sense of each piece’s scale. The epic scenes depicted on several larger canvases by McKenzie and Markram were simply fantastic. Indeed, all the art on show had more impact from being proudly arranged in a gallery than could be obtained in front of a screen.

I hope this will be the first act in a more active reaction from these artists against cultural stagnation and decline. From my conversations with the artists present during my visit, they are certainly willing to continue fighting for culture. It shall be up to readers and fellow writers to continue supporting this (and other worthy endeavours) in the absence of friendly institutions or the wealthy patrons of times past. At least it has now been proven that our aspirations for the future of culture have the ability to become reality.


Charles’ Personal Rule: A Stable or Tyrannised England?

Within discussions of England’s political history, the most famous moments are known and widely discussed – the Magna Carta of 1215, and the Cromwell Protectorate of the 1650s spring immediately to mind. However, the renewal of an almost-mediaeval style of monarchical absolutism, in the 1630s, has proven both overlooked and underappreciated as a period of historical interest. Indeed, Charles I’s rule without Parliament has faced an identity crisis amongst more recent historians – was it a period of stability or tyranny for the English people?

If we are to consider the Personal Rule as a period in enough depth, the years leading up to the dissolution of Charles’ Third Parliament (in 1629) must first be understood. Succeeding his father James I in 1625, Charles’ personal style and vision of monarchy would prove to be incompatible with the expectations of his Parliaments. Having enjoyed a strained but respectful relationship with James, MPs would come to question Charles’ authority and choice of advisors in the coming years. Indeed, it was Charles’ stubborn adherence to the doctrine of the Divine Right of Kings, writing once that “Princes are not bound to give account of their actions but to God alone”, that meant that he believed compromise to be defeat, and any pushback against him to be a sign of disloyalty.

Constitutional tensions between King and Parliament proved the most contentious of all issues, especially regarding the King’s role in taxation. At war with Spain between 1625 and 1630 (and having just dissolved the 1626 Parliament), Charles was lacking in funds. Thus, he turned to non-parliamentary forms of revenue, notably the Forced Loan (1627) – declaring a ‘national emergency’, Charles demanded that his subjects all make a gift of money to the Crown. Whilst the loan was theoretically optional, those who refused to pay were often imprisoned; a notable example would be the Five Knights’ Case, in which five knights were imprisoned for refusing to pay (with the court ruling in Charles’ favour). This would eventually culminate in Charles’ signing of the Petition of Right (1628), which protected the people from non-Parliamentary taxation, as well as other controversial powers that Charles chose to exercise, such as arrest without charge, martial law, and the billeting of troops.

The role played by George Villiers, the Duke of Buckingham, was another major factor that contributed to Charles’ eventual dissolution of Parliament in 1629. Having dominated the court of Charles’ father, Buckingham came to enjoy a similar level of unrivalled influence over Charles as his de facto Foreign Minister. It was, however, in his position as Lord High Admiral that he further worsened Charles’ already-negative view of Parliament. Responsible for both major foreign policy disasters of Charles’ early reign (Cadiz in 1625, and La Rochelle in 1627, both of which achieved nothing and killed 5,000 to 10,000 men), he was deemed by the MP Edward Coke to be “the cause of all our miseries”. The duke’s influence over Charles’ religious views also proved highly controversial – at a time when anti-Calvinism was rising, with critics such as Richard Montague and his pamphlets, Buckingham encouraged the King to continue his support of the leading anti-Calvinist of the time, William Laud, at the York House Conference in 1626.

Charles was heavily dependent on the counsel of Villiers until the Duke’s assassination in 1628, and it was, in fact, Parliament’s threat to impeach the Duke that encouraged Charles to agree to the Petition of Right. Fundamentally, Buckingham’s poor decision-making, in the end, meant serious criticism from MPs, and a King who believed this criticism to be Parliament overstepping the mark and questioning his choice of personnel.

Fundamentally by 1629, Charles viewed Parliament as a method of restricting his God-given powers, one that had attacked his decisions, provided him with essentially no subsidies, and forced him to accept the Petition of Right. Writing years later in 1635, the King claimed that he would do “anything to avoid having another Parliament”. Amongst historians, the significance of this final dissolution is fiercely debated: some, such as Angela Anderson, don’t see the move as unusual; there were 7 years for example, between two of James’ Parliaments, 1614 and 1621 – at this point in English history, “Parliaments were not an essential part of daily government”. On the other hand, figures like Jonathan Scott viewed the principle of governing without Parliament officially as new – indeed, the decision was made official by a royal proclamation.

Now free of Parliamentary constraints, the first major issue Charles faced was his lack of funds. Lacking the usual taxation method and in desperate need of upgrading the English navy, the King revived ancient taxes and levies, the most notable being Ship Money. Originally a tax levied on coastal towns during wartime (to fund the building of fleets), Charles extended it to inland counties in 1635 and made it an annual tax in 1636. This inclusion of inland towns was construed as a new tax without parliamentary authorisation. For the nobility, Charles revived the Forest Laws (demanding landowners produce the deeds to their lands), as well as fines for breaching building regulations.

The public response to these new fiscal expedients was one of broad annoyance, but general compliance. Indeed, between 1634 and 1638, 90% of the expected Ship Money revenue was collected, providing the King with over £1m in annual revenue by 1637. Despite this, the Earl of Warwick questioned its legality, and the clerical leadership referred to all of Charles’ tactics as “cruel, unjust and tyrannical taxes upon his subjects”. However, the most notable case of opposition to Ship Money was the John Hampden case in 1637. A gentleman who refused to pay, Hampden argued that England wasn’t at war and that Ship Money writs gave subjects seven months to pay, enough time for Charles to call a new Parliament. Despite the Crown winning the case, it inspired more widespread opposition to Ship Money, such as the 1639-40 ‘tax revolt’, involving non-cooperation from both citizens and tax officials. Opposing this view, however, stands Sharpe, who claimed that “before 1637, there is little evidence at least, that its [Ship Money’s] legality was widely questioned, and some suggestion that it was becoming more accepted”.

In terms of his religious views, both personally and his wider visions for the country, Charles had been an open supporter of Arminianism from as early as the mid-1620s – a movement within Protestantism that staunchly rejected the Calvinist teaching of predestination. As a result, the sweeping changes to English worship and Church government that the Personal Rule would oversee were unsurprisingly extremely controversial amongst his Calvinist subjects, in all areas of the kingdom. In considering Charles’ religious aims and their consequences, we must focus on the impact of one man, in particular, William Laud. Having given a sermon at the opening of Charles’ first Parliament in 1625, Laud spent the next near-decade climbing the ranks of the ecclesiastical ladder; he was made Bishop of Bath and Wells in 1626, of London in 1629, and eventually Archbishop of Canterbury in 1633. Now 60 years old, Laud was unwilling to compromise any of his planned reforms to the Church.

The overarching theme of Laudian reforms was ‘the Beauty of Holiness’, which had the aim of making churches beautiful and almost lavish places of worship (Calvinist churches, by contrast, were mostly plain, to not detract from worship). This was achieved through the restoration of stained-glass windows, statues, and carvings. Additionally, railings were added around altars, and priests began wearing vestments and bowing at the name of Jesus. However, the most controversial change to the church interior proved to be the communion table, which was moved from the middle of the room to by the wall at the East end, which was “seen to be utterly offensive by most English Protestants as, along with Laudian ceremonialism generally, it represented a substantial step towards Catholicism. The whole programme was seen as a popish plot”.

Under Laud, the power and influence wielded by the Church also increased significantly – a clear example would be the fact that Church courts were granted greater autonomy. Church leaders also became ever more present as ministers and officials within Charles’ government, with the Bishop of London, William Juxon, appointed as Lord Treasurer and First Lord of the Admiralty in 1636. Moreover, despite already having the full backing of the Crown, Laud was not one to accept dissent or criticism and, although the severity of his actions has been exaggerated by recent historians, they can be identified as being ruthless at times. The clearest example would be the torture and imprisonment of his most vocal critics in 1637: the religious radicals William Prynne, Henry Burton and John Bastwick.

However successful Laudian reforms may have been in England (and that statement is very much debatable), Laud’s attempt to enforce uniformity on the Church of Scotland in the latter half of the 1630s would see the emergence of a united Scottish opposition against Charles, and eventually armed conflict with the King, in the form of the Bishops’ Wars (1639 and 1640). This road to war was sparked by Charles’ introduction of a new Prayer Book in 1637, aimed at making English and Scottish religious practices more similar – this would prove beyond disastrous. Riots broke out across Edinburgh, the most notable being in St Giles’ Cathedral (where the bishop had to protect himself by pointing loaded pistols at the furious congregation). This displeasure culminated in the National Covenant in 1638 – a declaration of allegiance which bound together Scottish nationalism with the Calvinist faith.

Attempting to draw conclusions about Laudian religious reforms very much hinges on the fact that, in terms of his and Charles’ objectives, they very much overhauled the Calvinist systems of worship, the role of priests, Church government, and the physical appearance of churches. The response from the public, however, ranging from silent resentment to full-scale war, displays how damaging these reforms were to Charles’ relationship with his subjects – coupled with the influence wielded by his wife Henrietta Maria, public fears about Catholicism very much damaged Charles’ image, and meant religion during the Personal Rule was arguably the most intense issue of the period. In judging Laud in the modern day, the historical debate has been split: certain historians focus on his radical uprooting of the established system, with Patrick Collinson suggesting the Archbishop to have been “the greatest calamity ever visited upon the Church of England”, whereas others view Laud and Charles as pursuing the entirely reasonable aim of a more orderly and uniform church.

Just as the Personal Rule’s religious direction was very much defined by one individual, so was its political one, by Thomas Wentworth, later known as the Earl of Strafford. Serving as the Lord Deputy of Ireland from 1632 to 1640, he set out with the aims of ‘civilising’ the Irish population, increasing revenue for the Crown, and challenging Irish titles to land – all under the umbrella term of ‘Thorough’, which aspired to concentrate power, crack down on opposition figures, and essentially preserve the absolutist nature of Charles’ rule during the 1630s.

Regarding Wentworth’s aims toward Irish Catholics, Ian Gentles’ 2007 work The English Revolution and the Wars in the Three Kingdoms argues the friendships Wentworth maintained with Laud and also with John Bramhall, the Bishop of Derry, “were a sign of his determination to Protestantize and Anglicize Ireland”. Devoted to a Catholic crackdown as soon as he reached the shores, Wentworth would subsequently refuse to recognise the legitimacy of Catholic officeholders in 1634, and managed to reduce Catholic representation in Ireland’s Parliament by a third between 1634 and 1640 – this, at a time when Catholics made up 90% of the country’s population. An even clearer indication of Wentworth’s hostility to Catholicism was his aggressive policy of land confiscation. Challenging Catholic property rights in Galway, Kilkenny and other counties, Wentworth would bully juries into returning a King-favourable verdict, and even those Catholics who were granted their land back (albeit only three-quarters) were now required to make regular payments to the Crown. Wentworth’s enforcing of Charles’ religious priorities was further evidenced by his reaction to those in Ireland who signed the National Covenant. The accused were hauled before the Court of Castle Chamber (Ireland’s equivalent to the Star Chamber) and forced to renounce ‘their abominable Covenant’ as ‘seditious and traitorous’.

Seemingly in keeping with other figures from the Personal Rule, Wentworth was notably tyrannical in his governing style. Sir Piers Crosby and Lord Esmonde were convicted of libel by the Court of Castle Chamber for accusing Wentworth of being involved in the death of Esmonde’s relative, and Lord Valentia was sentenced to death for “mutiny” – in fact, he’d merely insulted the Earl.

In considering Wentworth as a political figure, it is very easy to view him as merely another tyrannical brute, carrying out the orders of his King. Indeed, his time as Charles’ personal advisor (1639 onwards) certainly supports this view: he once told Charles that he was “loose and absolved from all rules of government” and was quick to advocate war with the Scots. However, Wentworth also saw great successes during his time in Ireland; he raised Crown revenue substantially by taking back Church lands and purged the Irish Sea of pirates. Fundamentally, by the time of his execution in May 1641, Wentworth possessed a reputation amongst Parliamentarians very much like that of the Duke of Buckingham; both men came to wield tremendous influence over Charles, as well as great offices and positions.

In the areas considered thus far, opposition to the Personal Rule appears to have been a rare occurrence, especially in any organised or effective form. Indeed, Durston claims the decade of the 1630s to have seen “few overt signs of domestic conflict or crisis”, viewing the period as altogether stable and prosperous. However, whilst certainly limited, the small amount of resistance can be viewed as representing a far more widespread feeling of resentment amongst the English populace. Whilst many actions received little pushback from the masses, the gentry, many of whom were becoming increasingly disaffected with the Personal Rule’s direction, gathered in opposition. Most notably, John Pym, the Earl of Warwick, and other figures collaborated with the Scots to launch a dissident propaganda campaign criticising the King, as well as encouraging local opposition (which saw some success, such as the mobilisation of the Yorkshire militia). Charles’ effective use of the Star Chamber, however, ensured opponents were swiftly dealt with, usually those who presented vocal opposition to royal decisions.

The historiographical debate surrounding the Personal Rule, and the Caroline Era more broadly, was and continues to be dominated by Whig historians, who view Charles as foolish, malicious, and power-hungry, and his rule without Parliament as destabilising, tyrannical and a threat to the people of England. A key proponent of this view is S.R. Gardiner who, believing the King to have been ‘duplicitous and delusional’, coined an alternative term to ‘Personal Rule’ – the Eleven Years’ Tyranny. This position has survived into the latter half of the 20th Century, with Charles having been labelled by Barry Coward as “the most incompetent monarch of England since Henry VI”, and by Ronald Hutton, as “the worst king we have had since the Middle Ages”.

Recent decades have seen, however, the attempted rehabilitation of Charles’ image by Revisionist historians, the most well-known, as well as most controversial, being Kevin Sharpe. Responsible for the landmark study of the period, The Personal Rule of Charles I, published in 1992, Sharpe came to be Charles’ most staunch modern defender. In his view, the 1630s, far from a period of tyrannical oppression and public rebellion, were a decade of “peace and reformation”. During Charles’ time as an absolute monarch, his lack of Parliamentary limits and regulations allowed him to achieve a great deal: Ship Money saw the Navy’s numbers strengthened, Laudian reforms meant a more ordered and regulated national church, and Wentworth dramatically raised Irish revenue for the Crown – all this, and much more, without any real organised or overt opposition figures or movements.

Understandably, the Sharpian view has received significant pushback, primarily for taking an overly optimistic view and selectively mentioning the Personal Rule’s positives. Encapsulating this criticism, David Smith wrote in 1998 that Sharpe’s “massively researched and beautifully sustained panorama of England during the 1630s … almost certainly underestimates the level of latent tension that existed by the end of the decade”. This has been built on by figures like Esther Cope: “while few explicitly challenged the government of Charles I on constitutional grounds, a greater number had experiences that made them anxious about the security of their heritage”.

It is worth noting however that, a year before his death in 2011, Sharpe came to consider the views of his fellow historians, acknowledging Charles’ lack of political understanding to have endangered the monarchy, and that, more seriously by the end of the 1630s, the Personal Rule was indeed facing mounting and undeniable criticism, from both Charles’ court and the public.

Sharpe’s unpopular perspective has been built upon by other historians, such as Mark Kishlansky. Publishing Charles I: An Abbreviated Life in 2014, Kishlansky viewed parliamentarian propaganda of the 1640s, as well as a consistent smear from historians over the centuries, as having resulted in Charles being viewed “as an idiot at best and a tyrant at worst”, labelling him as “the most despised monarch in Britain’s historical memory”. Charles, however, received no real preparation for the throne – it was always his older brother Henry who was the heir apparent. Additionally, once he was King, Charles’ Parliaments proved stubborn and uncooperative – by refusing to provide him with the necessary funding, for example, they forced Charles to enact the Forced Loan. Kishlansky does, however, concede the damage caused by Charles’ unmoving belief in the Divine Right of Kings: “he banked too heavily on the sheer force of majesty”.

Charles’ personality, ideology and early life fundamentally meant an icy relationship with Parliament, which grew into mutual distrust and the eventual dissolution. The period of Personal Rule remains a highly debated topic within academic circles, with the recent arrival of Revisionism posing a challenge to the long-established negative view of the Caroline Era. Whether the King’s financial, religious, and political actions were met with a discontented populace or with outright opposition, the identity crisis facing the period, that between tyranny and stability, has yet to be conclusively put to rest.


The Obsession with News

In 1980, Ted Turner and Reese Schonfeld co-founded the Cable News Network (CNN). Despite derision over the idea of a 24 hour rolling news channel, CNN became a massive hit and would become the forefather to the news system today. In the 43 years since CNN first aired, news channels have changed from having bulletins every few hours to being on air 24/7. Our parents would have to wait for the top of the hour for news, unless breaking news broke into programming, whilst we can just turn it on with a press of a button.

Whilst many may marvel at the idea of 24 hour news, it is part of why news today has its problems. As a result of constant media absorption, competition from social media and the internet, as well as a fast-paced world, society itself has become obsessed with the news. Every tiny little story becomes splashed across screens, both large and small, in a desperate attempt to capture the moment before it vanishes.

Everything is Breaking News

If, like me, you have the BBC news app alert on your phone, then this will be a similar tale. The alert goes off. You check it. Whilst it’s officially classed as ‘Breaking News,’ it’s not really that important. Some things are of course important. Look at the death of Her Majesty The Queen last year. That was a news story that knocked everything else off the air. Considering that she had been our monarch since 1952, it’s fair to say that this was incredibly important breaking news.

Generally, the app applies the term ‘Breaking News’ rather liberally. Holly Willoughby leaving This Morning after fourteen years is not worth your phone going off. Beyoncé removing ‘offensive lyrics’ from an old song isn’t worth it either.

That also applies to news channels. Sky News and the BBC will have that ticker going across the bottom of the screen quite happily for just about any reason. Rare is the day when the bottom of Sky News is not a flash of yellow and black. Even a slow news day will have breaking news just to keep things a bit fresh.

It’s understandable really. In this day and age, news travels fast. It comes and goes in the blink of an eye. News companies want to have their hold on the story before the next one comes. When Twitter/X or Facebook gets the news first, well, that’s one less story that they’ve managed to break to viewers. The big media organisations may have the means to research the stories and get the scoops, but they don’t ever get it out first. One is more likely to find out a story through social media than through the 24 hour news or its app.

Considering the point of the 24 hour news cycle is to be fresh, that’s not really a good thing.

Every Little Story, Made Bigger

On the 18th April 1930, BBC news would announce that “there is no news.”

Can you imagine that today? Another issue with the 24 hour cycle and news today is the fact that there’s a desperation to find something to report on. When channels and apps are never off, they can’t have a rest. Something must be going on. It doesn’t matter what it is, but it must be something.

Perhaps it’s a take on a news story through the issue of race, gender or sexuality. Perhaps it’s a random study from Australia. Whatever it is, it’s got a place in the news because it’s something.

Take for example the Daily Climate Show on Sky News. What was originally a daily, thirty-minute slot on prime time was cut back to a weekend event. It’s not hard to see why. In its desperation to make more news out of something, Sky took a risk by devoting half an hour every day to the exact same topic. Considering how divisive climate change and its presentation are as a subject, it was hardly a risk worth taking. Changing it to every weekend was still a poorly thought-out move.

Repetition

You might turn the news on when you get up at seven in the morning. You might turn the news on at ten before you go to bed. What might link those two viewings is that they are exactly the same.

When the media can’t slot a new story in, they’ll just repeat an old one. If it’s an unfolding story, then of course you’ll see it or read about it again later because there are new things to be said. The problem occurs when it’s the same story over and over again.

Nobody wants to hear the same story they did fifteen hours ago without new information. It’s tiresome.

The Fear Factor

Then there’s the fear in which the media thrives.

From the moment that Boris Johnson told us that we now had to stay in our homes because of COVID, the media was all over the pandemic – perhaps even before then. With nothing else happening because everyone was locked down, all the media could do was run constant stories about the ever-climbing death toll. At first, well, it was what we expected. Then it started to get a bit repetitive.

These stories tend to get a much frostier reception if reported today. Commentators scold the media for trying to scare us or create fear.

They could, however, get away with it during those early months. With nothing else to do, we had more time for the news. Their stories were constantly about the deaths and after effects of COVID. We were already unable to leave our homes and live our daily lives, with constant mask wearing when we went out, so did we need to be intimidated even more?

It’s not just COVID. Look at the climate protestors, especially the young ones, when interviewed. Some of them cry in fear for their future, weeping about the thought of a planet that could be gone when they have reached adulthood. Considering the constant doomsday coverage of climate change in the news, it’s easy to see where this fear comes from. Kids’ news shows like Sky’s awful FYI focus on the topic regularly. It’s constantly on mainstream news.

Children are more in tune with the world today. With all the darkness in the news and on social media, some will blame it for the declining mental health we are seeing in young people. Indeed, where is the hope? Well, people don’t watch the news to hear about new innovations or cute animals being born in zoos. Fear is more gripping than hope, and a bigger seller too, but it’s not good for morale.

It’s vitally important that we know what’s going on in the world, but too much news is bad for the soul. In a world where it’s all too accessible and the media makes money on constant news, we can’t rely on it for real information. We’re either fed fear or repetitiveness. The obsession with news is, ironically, making us less knowledgeable. Resist the urge to keep up beyond what is needed. It’s better for you.


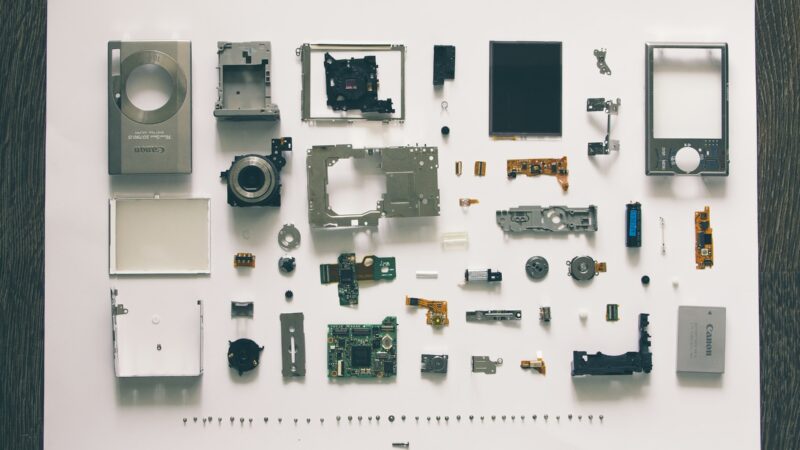

Liberalism and Planned Obsolescence

Virtually everyone at some point has complained about how their supposedly state-of-the-art phone, tablet, laptop, or computer doesn’t seem quite so cutting-edge when it either refuses to work properly or ceases to function entirely after a disappointingly brief period of time. This is not merely the grumblings of aggravated customers, but a consequence of “planned obsolescence.” The term dates back to the Great Depression, coined by Bernard London in his 1932 paper Ending the Depression Through Planned Obsolescence, but a practically concise definition comes courtesy of Jeremy Bulow as “the production of goods with uneconomically short useful lives so that customers will have to make repeat purchases.” Despite being an acknowledged (and in some cases encouraged) practice, it is still condemned; both Apple and Samsung have faced legal action on multiple occasions for introducing software updates which actively hinder the performance of older devices. In the face of all this, planned obsolescence isn’t going anywhere so long as there is technology, nor does anyone expect it to. It is, as death and taxes are, one of the few certainties of life.

As the title of this essay suggests, I do not intend to delve any further into the technological or economic ethics of planned obsolescence. Interesting as they may be, I want to focus on how the concept appears in a political context; more specifically, in liberalism.

One of the core tenets of liberalism is a belief in the “Whig interpretation of history.” In his critique of the approach, aptly titled The Whig Interpretation of History, Herbert Butterfield outlined the Whig disposition as being liable to “praise revolutions provided they have been successful, to emphasize certain principles of progress in the past and to produce a story which is the ratification if not the glorification of the present.” To boil it down, it is the belief that history is a continuous march of progress, with each successive step freer and more enlightened than the last. A Whiggish liberal is dangerously optimistic in their opinion that history has led to the present being the greatest social, economic, and political circumstances one could hitherto be born into. More dangerous still is their restlessness, for as good as the present may be, it cannot rest on its laurels and must make haste in progressing even further such that the future will be even better. The pinnacle of human development lasts as long as a microwave cooking a spoon, receiving for its valiant effort little other than sparks, fire, and irreparable damage resulting in its subsequent replacement.

The unrepentant Whiggery of the modern world has prompted scholars of the Traditionalist School of philosophy to label it an aberration amongst all other societies, as the first which does not assign any inherent value to, or more accurately, openly detests, perennial wisdom (timeless knowledge passed down through generations) and abstract metaphysical truths. In the words of René Guénon, “the most conspicuous feature of the modern period [is its] need for ceaseless agitation, for unending change, and for ever-increasing speed.” Quite literally, nothing is sacred. One of the primary causes of this is that modernity, defined by its liberalism, is materialist, and believes that anything and everything can and should be explained rationally and scientifically within the physical world. The immaterial and the spiritual are disregarded as irrational, outmoded and unjustifiable; it is, as Max Weber says, “disenchanted.”

To understand this further, we must consider Plato’s conceptions of the two distinct natures of the spiritual and the material/physical world, “being” and “becoming” respectively. Being is constant and axiomatic, characterised by abstract ideas, timeless truths and stability. Becoming on the other hand, as the nature of the physical world, reflects the malleability of its inhabitants and exists in an endless state of flux. Consider your first car: it will alter with time, the bodywork might rust and you may need new parts for it, and indeed it may eventually be handed on to a new owner or even scrapped entirely. Regardless of what changes physically, its first car status can never be separated from it, not even when you no longer own it or it’s recycled into a fridge, for it will always hold a metaphysical character on a plane beyond the material.

Julius Evola, another Traditionalist scholar, succinctly defined a Traditional society as one where the “inferior realm” of becoming is subservient to the “superior realm” of being, such that the inherent instability of the former is tempered by orientation to a higher spiritual purpose through deference to the latter. A society of liberalism is unsurprisingly not Traditional, lacking any interest in the principles of being, and is instead an unconstrained force of pure becoming. Perhaps rather than disinterest, we can more accurately characterise the liberal disposition towards being as hostile. After all, it constitutes the “customs” which one of classical liberalism’s greatest philosophers, John Stuart Mill, regarded as “despotic” and a “hindrance to human development.” Anything which is perennial, traditional, or spiritual is deleterious to the march of progress unless it can either justify its existence within the narrow rubric of liberal rationalism, or abandon its traditional reference points and serve new masters. With this mindset, your first car doesn’t represent anything to do with the sense of both liberation and responsibility that comes with being able to transport yourself; it is simply a lump of metal to tide you over until you can get a more expensive lump of metal.

Of course, I do not advocate keeping a car until it falls to pieces; it is simply a metaphor for considering the abstract significance of things which may be obscured by their physical characteristics. In the real world the stakes are much higher: we aren’t just talking about old cars but long-standing cultural structures, community values and particularisms, and other such social authorities that fall victim to the ravenous hunger of liberal progressivism.

The consequence of this, as with all things telluric, material, or designed by human effort, is impermanence. Without reference to being, and with its deliberate denigration, ideas, concepts and structures formed within the liberal system have no permanent meaning; they are as fickle as the humans who constructed them. Roger Scruton eloquently summarised this conundrum when lambasting what he called the “religion of Rights”, whereby human rights, or indeed any concepts of becoming (without spiritual reference, or reference to being) are defined by subjective “moral opinions” and “legal precepts.” Indeed “if you ask what rights are human or fundamental you get a different answer depending whom you ask.” I would further add the proviso of when you ask, as a liberal of any given period appears to their successors as at best outdated or at worst reactionary. Plucking a liberal each from 1961, 1981, 2001, and 2021, and sitting them around a table to discuss their beliefs, would result in very little agreement. They may concur on nondescript notions of “freedom” and “equality”, but they would struggle to converge on a common understanding of them.

To summarise, any idea, concept or structure that exists within or is a product of liberalism is innately short-lived, as the ceaseless agitation of becoming necessitates its destruction in order to maintain the pace of the march of progress. But actual people, regardless of how progressive or rational they claim to be, rarely keep up with this speed. They tend to follow Robert Conquest’s first law of politics: “everyone is conservative about what he knows best.” People are naturally defensive of the familiar; just as an aging iPhone slows down with time or when there’s a new update it can’t quite cope with, so too will liberals who fail to adapt to changing circumstances. Sadly for them, the progressive thirst of liberalism requires constant refreshment of eager foot-soldiers if its current flock cannot keep up, and it is unafraid to put down any fallen comrades if they prove a liability, no matter how loyal or consequential they may have once been. Less, as Isaac Newton famously wrote, “standing on the shoulders of giants”, more “relentlessly slaying giants and standing on a pile of their fallen corpses”, which as far as I’m aware no one would ever outright admit to.

You don’t have to look particularly far to find recent examples of this. In the 1960s and 70s, John Cleese pioneered antinomian satire such as Monty Python and Fawlty Towers, specifically mocking religious and British sensibilities. Now, in response to his assertion that cultural and ethnic changes have rendered London “no longer English”, he is derided for being stuffy and racist. Indeed, Ken Livingstone, Boris Johnson, and Sadiq Khan, the three progressive men (in their own unique ways) who have served as Mayor of London since the office’s establishment in 2000, lined up on separate occasions to attack Cleese, with Khan suggesting that the comments made him “sound like he’s in character as Basil Fawlty.” There is certainly a poetic irony in becoming the very thing you once satirised, or perhaps an elegiac one for the liberals who dug their own graves by tearing down the system, only to become the system and therefore a target of that same abuse at the hands of others.

Another example is George Galloway, a staunch socialist, pro-Palestinian, and unbending opponent of capitalism, war, and Tony Blair. Since 2016 however, he has come under fire from fellow leftists for supporting Brexit (notably, something that was their domain in the halcyon days of Tony Benn, Michael Foot, and Peter Shore) and for attacking woke liberal politics. Other fallen progressives include J. K. Rowling and Germaine Greer, feminists who went “full Karen” by virtue of being TERFs, and Richard Dawkins, one of New Atheism’s four horsemen, who was stripped of his Humanist of the Year award for similar anti-Trans sentiments. All of these people are progressives, either of the liberal or socialist variety, the difference matters little, but their fall from grace in the eyes of their fellow progressives demonstrates the inevitable obsolescence innate to their belief system. How long will it be until the fully updated progressives of 2021 are replaced by a newer model?

On a broader scale, we can think of it in terms of generational divides regarding social attitudes, where the boomers and Generation X are often characterised as the conservatives pitted against the liberal millennials and Generation Z. Yet during the childhood of the boomers, the United Nations was established and adopted the Universal Declaration of Human Rights, and when they hit adolescence and early adulthood the sexual revolution had begun, with birth control widespread and both homosexuality and abortion legalised. Generation X culture emerged when all this was fully formed, and rebelled against utopian boomer ideals and values in the shape of punk rock, the New Romantics, and mass consumerism. If the boomers were, and still are, ceaselessly optimistic, Generation X on the other hand are tiringly cynical. This trend predictably continued: millennials rebelled against Generation X and Generation Z rebelled against millennials. All of them had their progressive shibboleths, and all of them were made obsolete by their successors. To a liberal Gen Zer in 2021, it seems unthinkable that they will one day be the crusty boomer, but Generation Alpha will no doubt disagree.

Since 2010, Apple’s revolutionary iPad has had 21 models, but the current model could only look on in awe at the sheer number of different versions of progressive which have been churned out since the age of Enlightenment. As an object, the iPad has no choice in the matter. Tech moves fast, and its creators build it with the full knowledge it will be supplanted as the zenith of Apple’s capabilities within two years or less. The progressives on the other hand are inadvertently supportive of their inevitable obsolescence. Just as they were eager not to let the supremacy of their ancestors’ ideas linger for too long, lest the insatiable agitation of Whiggery be halted for a moment, their successors hold an identical opinion of them. Their imperfect human sluggishness will leave them consigned to the dustbin of history, piled in with both the traditionalism they so detested and the triumphs of liberalism that didn’t quite get with the times once they were accepted as given. Like Ozymandias, who stood tall over the domain of his glory, they too are consigned to a slow burial courtesy of the sands of time.

As much as planned obsolescence is a regrettable part of modern technology, so too is it an inescapable component of liberalism. Any idea, concept, or structure can only last for a given time period before it is torn down or has its nature drastically altered beyond recognition to stop it forming into a new despotic custom. Without reference to being, the world and its products are left purely in the hands of mankind. Defined by caprice, “freedom”, “equality”, or “democracy” can be given just as quickly as they can be taken, with little justification required other than the existing definition requiring amendment. Who decides the new meaning? And what happens to those who defend the existing one? Irrelevant, for one day both will be relics, and so too shall the ones that follow it. What happens when there is no more progress to be made? Impossible to say for certain, but if we are to take example from nature, a tornado once dissipated leaves behind only eerie silence and a trail of destruction, from which the only answer is to rebuild.
